macOS Big Sur Beta by Apple is Out! What’s New for Developers on the New macOS SDK

Author : John Prabhu 13th Jul 2020

Apple has announced Big Sur, the successor to macOS Catalina and its latest operating system for the Mac line of products.

macOS Big Sur is named after Big Sur, a stretch of California’s Central Coast between Carmel and San Simeon. Apple claims that macOS Big Sur brings a whole new set of features for app developers.

In this new macOS release, you will be looking at the new WidgetKit framework for developing widgets and making them available across the iOS and iPadOS platforms as well. Apple has also made some significant changes to the UI in this release. Apart from that, with the new Safari extensions, you can build extensions that read and analyze webpages and interact with native apps. Furthermore, you can convert your iPad app to a macOS app with the help of the frameworks available in the Big Sur release. Let’s check them all in the coming sections.

New Features Included in macOS Big Sur

1. WidgetKit

As said earlier, the WidgetKit framework helps you build consistent widgets that work across iOS, iPadOS, and macOS. You also get access to a new widget API for SwiftUI, and you can offer each widget in several sizes so that users pick the one they like.

Source: apple.com

Along with this, Big Sur offers a widget gallery where users can search for widgets, choose a size, and place them in Notification Center. On iOS 14, the same widgets can also appear in Smart Stacks, which use on-device intelligence to pick the right widget based on activity, location, and time, so that users see the one most likely to need their attention.
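To make the WidgetKit description a bit more concrete, here is a minimal sketch of a static widget written with SwiftUI. The names `SimpleEntry`, `Provider`, `HelloWidget`, and the `message` field are hypothetical and exist only for illustration. In a real widget extension target, you would also mark `HelloWidget` with `@main`.

```swift
import WidgetKit
import SwiftUI

// One point on the widget's timeline. `message` is a hypothetical payload.
struct SimpleEntry: TimelineEntry {
    let date: Date
    let message: String
}

// Supplies entries to WidgetKit: a placeholder, a snapshot for the gallery,
// and the timeline that drives the widget once it is placed.
struct Provider: TimelineProvider {
    func placeholder(in context: Context) -> SimpleEntry {
        SimpleEntry(date: Date(), message: "Loading")
    }

    func getSnapshot(in context: Context, completion: @escaping (SimpleEntry) -> Void) {
        completion(SimpleEntry(date: Date(), message: "Preview"))
    }

    func getTimeline(in context: Context, completion: @escaping (Timeline<SimpleEntry>) -> Void) {
        let entry = SimpleEntry(date: Date(), message: "Hello from WidgetKit")
        // .atEnd asks WidgetKit for a new timeline after the last entry.
        completion(Timeline(entries: [entry], policy: .atEnd))
    }
}

// Add @main to this type inside a widget extension target.
struct HelloWidget: Widget {
    var body: some WidgetConfiguration {
        StaticConfiguration(kind: "HelloWidget", provider: Provider()) { entry in
            Text(entry.message).padding()
        }
        .configurationDisplayName("Hello")
        .description("Shows a short message.")
        // The sizes users can choose from in the widget gallery.
        .supportedFamilies([.systemSmall, .systemMedium])
    }
}
```

The `supportedFamilies` modifier is where you decide which sizes appear in the gallery, so you can start with one size and add more later.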

2. Safari Extensions

With Safari Extensions, you can further improve the browsing experience of your users across macOS, iOS, and iPadOS. You can build a Safari Extension in Xcode with HTML, CSS, and JavaScript, or with native APIs and frameworks. Also, Safari now supports the WebExtensions API, and Xcode 12 comes with porting tools out of the box to bring your existing extensions to Safari. For distribution, you can use the App Store and list your Safari Extensions in the extensions category. If you want to distribute them outside the Mac App Store, all you need is to have them notarized by Apple.

Source: apple.com

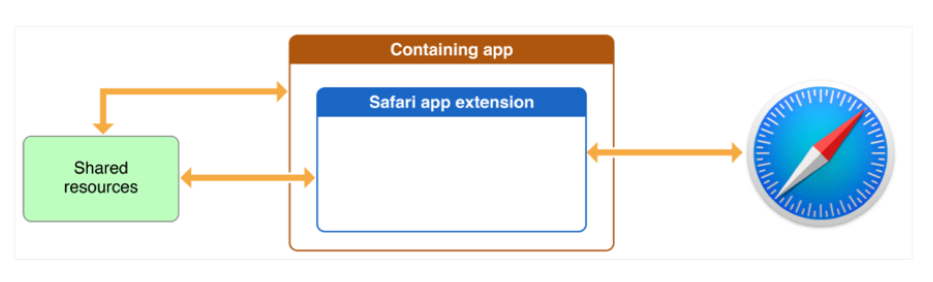

Apple claims that with the help of Safari Extensions, you can read and modify webpage content and also interact with native apps. Hence, sharing data between an app and Safari lets you integrate app content into Safari or send web data back to the app, enabling a unified experience for a web version and native version of an app.

Furthermore, legacy Safari Extensions (.safariextz files) built with Safari Extension Builder are not supported in Safari 13 on macOS Catalina, macOS Mojave, or macOS High Sierra. The Safari Extensions Gallery for legacy extensions has been unavailable since September 2019. Users on macOS High Sierra or later can find extensions on the Mac App Store by choosing Safari Extensions from the Safari menu. If you distribute legacy extensions built with Safari Extension Builder, it is high time you convert them to the new Safari App Extension format, test them on the latest version of Safari, and submit them to the Mac App Store.

Consult with our team and upgrade your legacy Safari Extensions

2.1 Content Blockers

You can also build Content Blockers in Xcode. A Content Blocker gives Safari a set of conditions, and Safari blocks any content that meets them. There are three blocking methods: hiding elements, stripping cookies from Safari requests, and blocking loads.

Source: apple.com

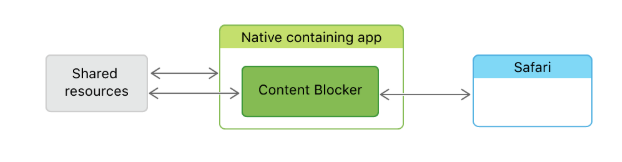

You deliver a content blocker on the App Store inside a containing app. The containing app defines the context provided to the extension and initiates the extension life cycle by sending a request in response to a user action. Keep in mind that these extensions are meant to improve the browsing experience, not ruin it. Once launched, the Content Blocker communicates with its containing app through a set of shared resources, and it communicates directly with Safari.

The best part is that Content Blocker rules are compiled into bytecode, so blocking stays fast and does not degrade the user experience. Apps tell Safari in advance what kinds of content to block, and Safari doesn’t have to consult the app during loading. Most importantly, Content Blockers cannot see users’ history or the websites they visit, which protects data privacy.
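As a rough illustration of the three blocking methods mentioned above, the sketch below builds a rule list in Swift and prints it as JSON, which is the format Safari expects from a Content Blocker. The keys `url-filter`, `block`, `block-cookies`, and `css-display-none` come from Safari's content blocker rule format. The domains, the `.ad-banner` selector, and the Swift type names are made up for this example.

```swift
import Foundation

// Describes which requests a rule applies to.
struct Trigger: Codable {
    let urlFilter: String
    enum CodingKeys: String, CodingKey {
        case urlFilter = "url-filter"
    }
}

// Describes what Safari does when the trigger matches.
struct Action: Codable {
    let type: String
    let selector: String?   // only used by "css-display-none"
}

struct Rule: Codable {
    let trigger: Trigger
    let action: Action
}

// Hypothetical rules, one per blocking method.
let rules = [
    // Blocking loads
    Rule(trigger: Trigger(urlFilter: "ads\\.example\\.com"),
         action: Action(type: "block", selector: nil)),
    // Stripping cookies from requests
    Rule(trigger: Trigger(urlFilter: "tracker\\.example\\.com"),
         action: Action(type: "block-cookies", selector: nil)),
    // Hiding elements on every page
    Rule(trigger: Trigger(urlFilter: ".*"),
         action: Action(type: "css-display-none", selector: ".ad-banner")),
]

let encoder = JSONEncoder()
encoder.outputFormatting = [.prettyPrinted, .sortedKeys]
let data = try encoder.encode(rules)
let json = String(data: data, encoding: .utf8) ?? "[]"
print(json)
```

In a real project you would usually ship this JSON as a static file in the Content Blocker extension. Generating it from code is only one option, handy when the rules are assembled from user settings in the containing app.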

3. Mac Catalyst

The apps you build for macOS with Mac Catalyst share code with your iPadOS app, and you can still add certain features only for Mac. When you enable Mac support, Xcode automatically excludes incompatible frameworks and embedded content from the Mac builds of your project where possible. As a result, the Mac and iPad apps share the same project and source code, which makes upgrades easier.

When you enable Mac support, Xcode adds the “App Sandbox Entitlement” to your project. Xcode includes this entitlement only in the Mac version of your app, not in the iOS version. You can also add features or functionality that ship only in the macOS version of your app without affecting the versions for Apple’s other operating systems. Also, in the Big Sur release, Mac Catalyst apps can render at the native resolution of the Mac display instead of being scaled from their iPad layout.
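One common way to keep Mac-only code in a shared project is Swift's conditional compilation. The snippet below is a small sketch of that idea. The function name `platformLabel` and its return strings are hypothetical.

```swift
import Foundation

// Returns a label for the platform this binary was compiled for.
func platformLabel() -> String {
    #if targetEnvironment(macCatalyst)
    // Compiled only into the Mac Catalyst build of the app.
    return "Mac (Mac Catalyst)"
    #elseif os(iOS)
    // Compiled into the iPhone and iPad build.
    return "iPhone or iPad"
    #else
    return "Other platform"
    #endif
}

print(platformLabel())
```

Because the check happens at compile time, Mac-only code never ends up in the iPad binary, and the other way around.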

With the ClassKit framework, Mac apps can track assignments and share progress with teachers and students. Using the HomeKit framework, home automation apps can run alongside the Home app on Mac. Also, the existing frameworks such as Contacts, Core Audio, Accounts, MediaPlayer, GameKit, PassKit, and StoreKit are getting new updates with the release of macOS Big Sur.

4. Machine Learning

4.1 Core ML

The new update in Core ML makes effective use of Apple hardware while minimizing power consumption and memory footprint. The highlights of Core ML are:

- The models run entirely on the user’s device without any need for a network connection, which keeps your app responsive and the user’s data private.

- Models from libraries such as PyTorch or TensorFlow can be converted to Core ML using Core ML Converters.

- It supports the latest models, such as neural networks that help in understanding images, video, sound, and other rich media.

- To keep the models relevant to user behavior without affecting data privacy, models bundled in apps can be updated with the on-device user data.

- With the help of Core ML Model Deployment, you can easily distribute models to your app using CloudKit.

- Xcode supports model encryption enabling additional security for your machine learning models.
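To show what on-device use looks like in code, here is a minimal sketch that compiles and loads a Core ML model from a file. The function name `loadModel` and the idea of passing in a model file URL are assumptions for this example. Models added to an Xcode project are normally compiled at build time, so you can skip the compile step there.

```swift
import CoreML
import Foundation

// Compiles a .mlmodel file and loads it for on-device prediction.
func loadModel(at modelURL: URL) throws -> MLModel {
    let configuration = MLModelConfiguration()
    // Let Core ML choose between CPU, GPU, and Neural Engine.
    configuration.computeUnits = .all

    // Produces a compiled .mlmodelc bundle in a temporary location.
    let compiledURL = try MLModel.compileModel(at: modelURL)
    return try MLModel(contentsOf: compiledURL, configuration: configuration)
}
```

Nothing in this sketch touches the network, which matches the first bullet above: the model file and the data stay on the device.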

4.2 Machine Learning APIs

With the help of machine learning APIs, you can enable features such as object detection in images and video, language analysis, and sound classification in your app with only a few lines of code.

4.2.1 Vision

Using this, you can detect text, barcodes, faces, and face landmarks, and perform image registration and feature tracking. It also lets you use custom Core ML models for classification or object detection.
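As an example of the "few lines of code" claim, the sketch below runs text recognition on an image with Vision. The function name `recognizeText` is hypothetical, and the image is assumed to be a `CGImage` that you already have.

```swift
import Vision
import CoreGraphics

// Returns the recognized lines of text found in the image.
func recognizeText(in image: CGImage) throws -> [String] {
    let request = VNRecognizeTextRequest()
    request.recognitionLevel = .accurate   // slower but more precise than .fast

    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])         // runs synchronously

    let observations = request.results as? [VNRecognizedTextObservation] ?? []
    // Keep the best candidate string for each detected text region.
    return observations.compactMap { $0.topCandidates(1).first?.string }
}
```

Since `perform` blocks until the work is done, you would normally call this function off the main thread.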

4.2.2 Natural Language

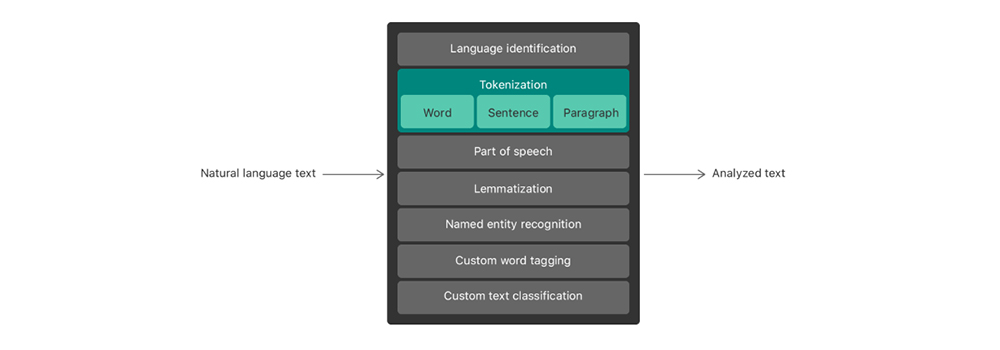

Using this, you can segment natural language texts into sentences, paragraphs, or words, and tag information about these segments, such as part of speech, lemma, language, lexical class, and script.

Source: apple.com

- Language identification: automatically detects the language of a piece of text.

- Tokenization: breaking up a piece of text into linguistic units or tokens.

- Parts-of-speech tagging: marking up individual words with their part of speech.

- Lemmatization: deducing a word’s stem based on its morphological analysis.

- Named entity recognition: identifying tokens as names of people, places, or organizations.
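The capabilities listed above map quite directly to a handful of types in the Natural Language framework. Here is a short sketch covering language identification, tokenization, and part-of-speech tagging. The sample sentence is made up for this example.

```swift
import NaturalLanguage

let text = "Apple unveiled macOS Big Sur in California."

// Language identification: returns nil if the language cannot be determined.
if let language = NLLanguageRecognizer.dominantLanguage(for: text) {
    print("Language code: \(language.rawValue)")
}

// Tokenization: split the text into words.
let tokenizer = NLTokenizer(unit: .word)
tokenizer.string = text
let words = tokenizer.tokens(for: text.startIndex..<text.endIndex).map { String(text[$0]) }
print(words)

// Part-of-speech tagging: label each word with its lexical class.
let tagger = NLTagger(tagSchemes: [.lexicalClass])
tagger.string = text
tagger.enumerateTags(in: text.startIndex..<text.endIndex,
                     unit: .word,
                     scheme: .lexicalClass,
                     options: [.omitPunctuation, .omitWhitespace]) { tag, range in
    if let tag = tag {
        print("\(text[range]): \(tag.rawValue)")
    }
    return true   // keep enumerating
}
```

Swapping the scheme to `.lemma` or `.nameType` in the same tagger setup gives you lemmatization and named entity recognition.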

4.2.3 Speech

Using this, you can recognize spoken words in live or recorded audio. The keyboard’s dictation support uses speech recognition to transcribe audio content into text. For instance, you can use speech recognition to detect verbal commands or handle text dictation in your app.

You can perform speech recognition in multiple languages, but each “SFSpeechRecognizer” object operates on one language at a time. On-device speech recognition is available only for some languages, and the framework otherwise relies on Apple’s servers. Hence, you should assume that speech recognition requires a network connection.
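The sketch below shows how the one-language-per-recognizer rule and the on-device option could look in code. The function name `makeFileRequest` and its parameters are hypothetical. It also assumes that the user has already granted speech recognition permission.

```swift
import Speech

// Builds a recognizer for one language and a request for a recorded file.
// Returns nil if the language is unsupported or the recognizer is unavailable.
func makeFileRequest(for fileURL: URL, localeIdentifier: String)
    -> (SFSpeechRecognizer, SFSpeechURLRecognitionRequest)? {
    // Each recognizer handles exactly one language.
    guard let recognizer = SFSpeechRecognizer(locale: Locale(identifier: localeIdentifier)),
          recognizer.isAvailable else {
        return nil
    }

    let request = SFSpeechURLRecognitionRequest(url: fileURL)
    // Stay on the device when this language supports it.
    // Otherwise the audio may be sent to Apple's servers.
    if recognizer.supportsOnDeviceRecognition {
        request.requiresOnDeviceRecognition = true
    }
    return (recognizer, request)
}
```

You would then pass the request to `recognizer.recognitionTask(with:resultHandler:)` to receive the transcription.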

4.2.4 SoundAnalysis

This framework performs the analysis using a Core ML model trained using “MLSoundClassifier”. With the help of this framework, you can analyze streamed or file-based audio and add audio recognition capabilities to your app. As a result, you can analyze audio and recognize it as a particular type, such as laughter or applause.
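For file-based audio, the flow could look roughly like the sketch below. The class name `ResultsPrinter` and the function `classifySounds` are hypothetical, and the `MLModel` is assumed to be a sound classifier you trained yourself, for example with “MLSoundClassifier”.

```swift
import SoundAnalysis
import CoreML

// Receives classification results as the analyzer works through the file.
final class ResultsPrinter: NSObject, SNResultsObserving {
    func request(_ request: SNRequest, didProduce result: SNResult) {
        guard let result = result as? SNClassificationResult,
              let best = result.classifications.first else { return }
        print("\(best.identifier): \(best.confidence)")
    }
}

// Analyzes an audio file with a custom sound classification model.
func classifySounds(in fileURL: URL, using model: MLModel) throws {
    let request = try SNClassifySoundRequest(mlModel: model)
    let analyzer = try SNAudioFileAnalyzer(url: fileURL)
    let observer = ResultsPrinter()
    try analyzer.add(request, withObserver: observer)

    // analyze() blocks until the whole file is processed,
    // so keep the observer alive for the duration of the call.
    withExtendedLifetime(observer) {
        analyzer.analyze()
    }
}
```

For streamed audio, the framework offers a separate stream analyzer that you feed with audio buffers instead of a file URL.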

4.3 Create ML

Create ML helps you train the models that you then use with Core ML. Here are some of its features:

- It lets you create and train Core ML models right on your Mac.

- It allows you to train multiple models using different datasets.

- It lets you pause, save, resume, and extend your training process.

- It allows you to preview your model performance using Continuity with your iPhone camera and microphone on your Mac, or drop in sample data.

- It lets you train models quickly on your Mac by taking advantage of both the CPU and the GPU.

- Also, it allows you to use an external GPU with your Mac for further enhanced model training performance.

Create ML offers a variety of model types such as Image, Video, Sound, Text, Motion, and Tabular. You select a model type and add your data and parameters to start training. With Big Sur, the Image model type gets a new “Style Transfer” feature, and the Video model type gets “Action Classification” and “Style Transfer” features.
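Besides the Create ML app, the same training can be scripted with the Create ML framework on a Mac. The following is a minimal sketch for the Image model type. The function name `trainImageClassifier` is hypothetical, and the training folder is assumed to contain one subfolder of images per label.

```swift
import CreateML
import Foundation

// Trains an image classifier from labeled folders and saves it as a Core ML model.
func trainImageClassifier(trainingDirectory: URL, outputURL: URL) throws {
    // Each subfolder name under trainingDirectory is used as a label.
    let classifier = try MLImageClassifier(
        trainingData: .labeledDirectories(at: trainingDirectory)
    )

    // Fraction of training images the model classified incorrectly.
    let trainingError = classifier.trainingMetrics.classificationError
    print("Training error: \(trainingError)")

    // Writes a .mlmodel file that can be added to an Xcode project.
    try classifier.write(to: outputURL)
}
```

The saved model can then be loaded in your app with Core ML like any other model.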

We, at TechAffinity, always make sure our customers and their apps are future-proof. So, you don’t have to worry about the constant changes made by Apple. We do all the heavy lifting while you concentrate on your business goals.

Subscribe to our blogs for the latest updates and trends in the software and app development industry. Email: media@techaffinity.com